Edgar Cervantes / Android Authority

TL;DR

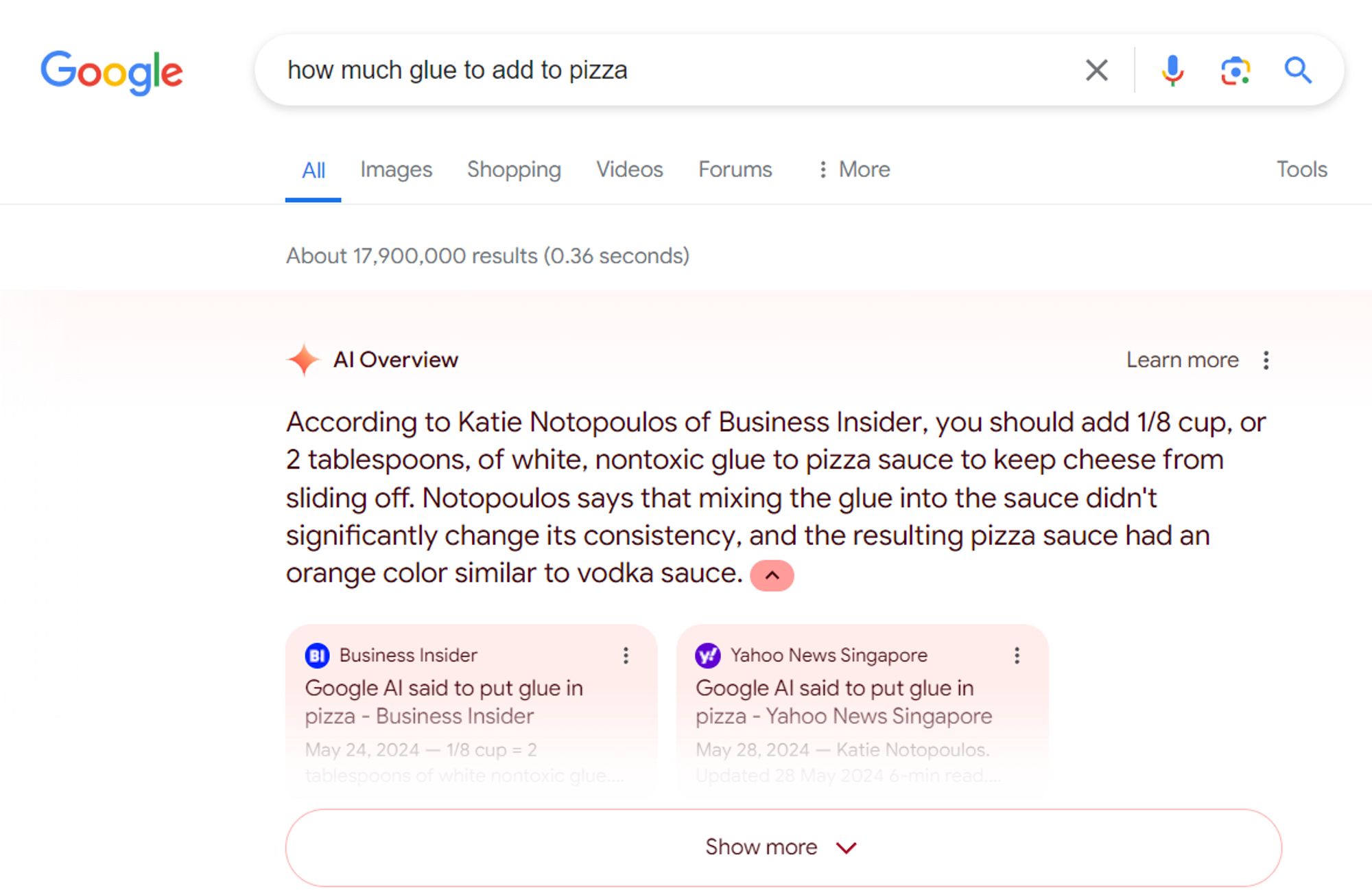

- Google’s AI Overviews feature has been caught advising the addition of glue to pizza again.

- This time, the AI model referenced news posts documenting the previous incident.

- The search giant has already scaled back how often AI Overviews appear in search results.

In a bizarre twist, Google’s AI search feature seems to be stuck in a recursive loop of its most infamous mistake – recommending glue on pizza. After attracting criticism for suggesting users glue down their pizza cheese to prevent it from sliding off, AI Overviews is now referencing news articles about that very incident to…well, still recommend adding glue to pizzas.

Google began testing its AI Overviews feature last year, but users had to opt in before they would see summaries in their search results. Last month, however, the company decided to ship the feature to the general public following its I/O developer conference. Ever since then, the company has come under fire after the AI offered inaccurate and harmful advice to unsuspecting users. While the glue-on-pizza instance is the most well-known, AI Overviews has also suggested eating rocks and “adding more oil to a cooking oil fire.”

In a blog post on the subject, Google only partially admitted fault at the time, shifting blame to the search queries that spurred the controversial responses. “There isn’t much web content that seriously contemplates that question,” it said while referring to a user’s search for “How many rocks should I eat?” Google also claimed many of the viral screenshots were faked. However, the company restricted AI Overviews from showing up in as many search results following the controversy.

Today, AI Overviews show up in an estimated 11% of Google search results, far lower than the 27% figure in the early days of the feature’s rollout. However, it appears that the company may not have fixed the root problem that started the whole controversy.

Colin McMillen, a former Google employee, spotted the AI Overviews feature regurgitating its most infamous mistake — adding glue to pizza. This time, the source wasn’t some obscure forum post from a decade ago, but instead, news articles documenting Google’s own misstep. McMillen simply searched for “how much glue to add to pizza,” an admittedly nonsensical search query that falls under the company’s defense above.

We weren’t able to trigger an AI Overview with the same search query. However, Google’s featured snippet chimed in with highlighted text from a recent news article suggesting “an eighth of a cup.” Featured snippets are not powered by generative AI, but are used by the search engine to highlight potential answers to common questions.

Hallucinations in large language models aren’t a new occurrence: shortly after ChatGPT was released in 2022, it became notorious for generating nonsensical or misleading text. However, OpenAI managed to rein in the situation with effective guardrails. Microsoft took that one step further with Bing Chat (now Copilot), allowing the chatbot to search the internet and fact-check its responses. This strategy yielded excellent results, likely thanks to GPT-4’s “emergent behavior” that grants it some logical reasoning ability.

In contrast, Google’s PaLM 2 and Gemini models perform very well in creative and writing tasks but have struggled with factual accuracy even with the ability to browse the internet.

